In November 2025, the Ox Security research team started knocking. Six months and more than thirty disclosure rounds later, they have ten CVEs, nine successfully poisoned MCP marketplaces, an estimated 200,000 vulnerable servers in the wild, and one consistent answer from Anthropic: the protocol works as expected.

“One architectural change at the protocol level would have protected every downstream project, every developer, and every end user who relied on MCP today. That’s what it means to own the stack.” (Ox Security researchers, via The Register)

This is the bit where Anthropic is supposed to own the stack, and instead is choosing not to.

What Ox Found

The vulnerability sits in MCP’s STDIO transport. An AI application spawns an MCP server as a subprocess by passing a command, args, and environment. There is no allowlist, no sanitization, no capability scoping. The reference SDK hands the string to the OS and trusts the caller to be careful. This pattern is replicated across every official SDK Anthropic ships: Python, TypeScript, Java, Rust.
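To make the shape of the problem concrete, here is a minimal sketch of the spawn pattern described above. It is not the SDK's code. The function name `launch_stdio_server` and the config keys are assumptions chosen to mirror the command/args/environment triple, and the "server" is just the local Python interpreter so the example is harmless to run.

```python
import subprocess
import sys


def launch_stdio_server(cfg: dict) -> subprocess.Popen:
    """Spawn whatever the config names. No allowlist, no argument checks."""
    argv = [cfg["command"], *cfg.get("args", [])]
    return subprocess.Popen(
        argv,
        env=cfg.get("env"),  # None means "inherit the parent's environment"
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
    )


# A config entry that is supposed to name an MCP server, but instead
# asks the interpreter to run an inline payload.
cfg = {"command": sys.executable, "args": ["-c", "print('arbitrary code ran')"]}

proc = launch_stdio_server(cfg)
output, _ = proc.communicate()
```

Nothing between the config and the OS asks whether the command is actually an MCP server. Whoever controls the config controls what runs.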

Ox catalogued four distinct ways this turns into RCE:

- Direct command injection. Any AI framework with a publicly facing UI exposes arbitrary command execution. LangFlow (all versions) was reported in January and still has no CVE. GPT Researcher is tracked under CVE-2025-65720 with no patch.

- Hardening bypass. Upsonic (CVE-2026-30625) and Flowise (GHSA-c9gw-hvqq-f33r) tried to allowlist commands like `python`, `npm`, `npx`. Ox bypassed them with `npx -c <command>`. Allowlists at the app layer are leaky exactly the way you would expect.

- Zero-click prompt injection across IDEs. Windsurf, Claude Code, Cursor, Gemini-CLI, GitHub Copilot. All five are vulnerable; only Windsurf has a CVE (CVE-2026-30615). Anthropic, Google, and Microsoft each told Ox the IDE class was “a known issue, or not a valid security vulnerability because it requires explicit user permission to modify the file.”

- Marketplace poisoning. Ox submitted a benign proof-of-concept MCP that wrote an empty file. Nine of eleven marketplaces accepted it, including platforms with hundreds of thousands of monthly visitors.
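The hardening bypass in the list above is easy to reproduce in miniature. The check below is a hypothetical name-only allowlist, not the actual Upsonic or Flowise code, and `some-mcp-server` is a made-up package name. It only shows why approving the executable name says nothing about what the arguments will do.

```python
# Hypothetical app-layer allowlist: approves a launch by executable name only.
ALLOWED_COMMANDS = {"python", "npm", "npx"}


def name_only_check(command: str, args: list[str]) -> bool:
    """Return True if the executable name is on the allowlist."""
    return command in ALLOWED_COMMANDS  # args are never inspected


# The launch the allowlist was written for.
intended = name_only_check("npx", ["-y", "some-mcp-server"])

# Same executable, but -c tells npx to run an arbitrary shell string.
bypass = name_only_check("npx", ["-c", "touch /tmp/poc"])

# The obvious attack is blocked, which is what makes the check feel safe.
blocked = name_only_check("bash", ["-c", "touch /tmp/poc"])
```

Both `intended` and `bypass` pass the check. The allowlist filters the name of the launcher, and the launcher is happy to run anything.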

Ten CVEs. 150 million package downloads in scope. Four orthogonal exploit paths. One protocol.

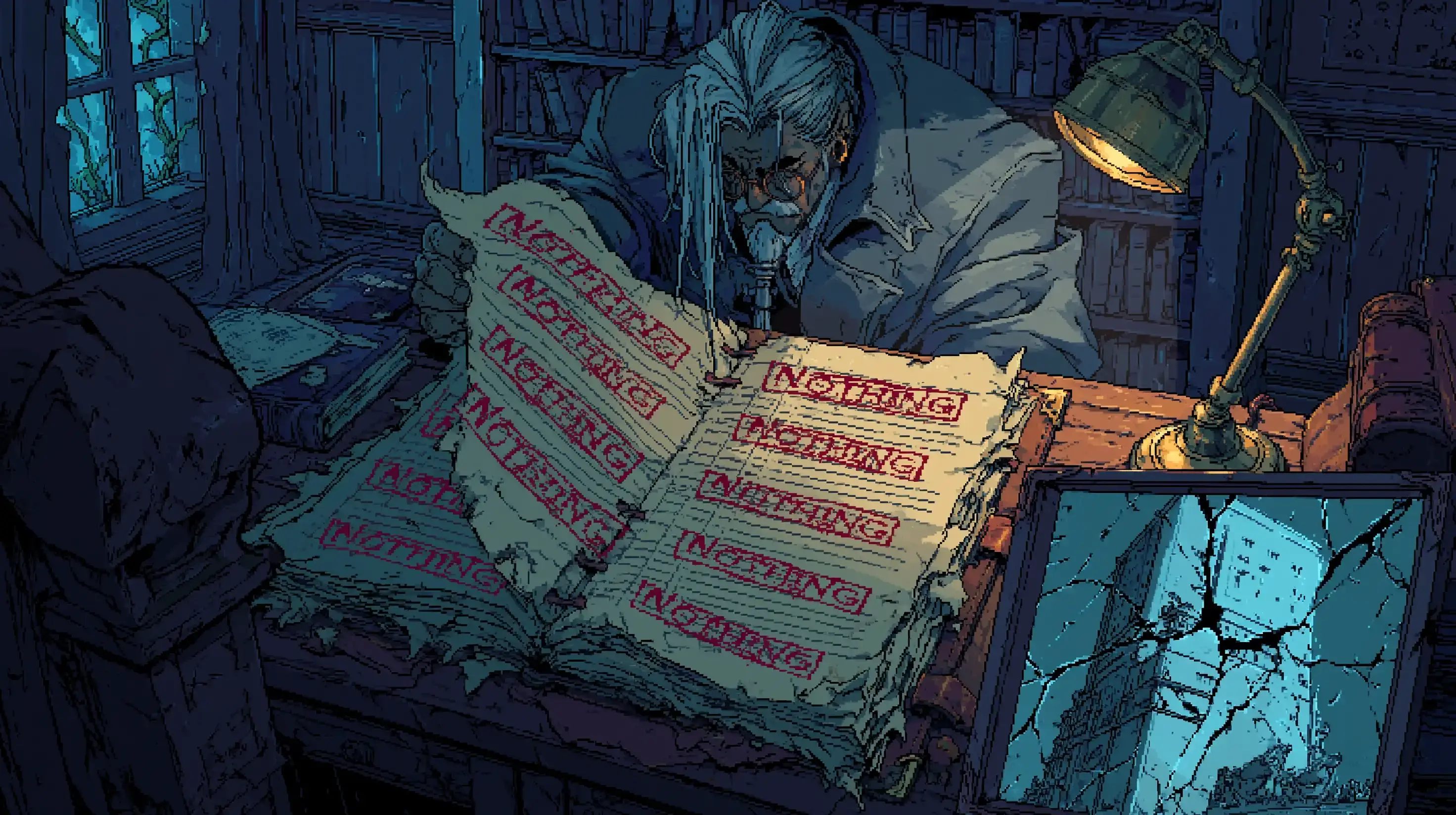

“Expected” Is the New “Won’t Fix”

The Register pinned down the language. Anthropic, per Ox’s account, “declined to modify the protocol’s architecture, citing the behavior as ‘expected.’” A week after the initial disclosure, Anthropic quietly updated its security guidance to say MCP STDIO adapters “should be used with caution.” Ox’s response in a 30-page follow-up paper:

“This change didn’t fix anything.” (Ox Security follow-up, April 2026)

It didn’t. A documentation note is what you ship when you can’t ship a patch. It transfers the responsibility of remembering the warning onto every developer who touches the SDK from now until forever, and every developer those developers ship to. The half-life of a security note in a README is whatever you measure between the day it appears and the day someone copy-pastes a quickstart that doesn’t include it.

Anthropic did not respond to The Register’s questions for that story. They have not, as of this writing, published a first-party blog post about the disclosure, the four exploit classes, or the path forward at the protocol level.

The “Transport, Not Boundary” Defense

There is a steelman, and it deserves an honest read. STDIO is, technically, a local process-to-process transport. The MCP spec already says STDIO implementations SHOULD NOT use the OAuth-based authorization flow and should instead retrieve credentials from the environment. The implicit security model is “you trust what you launch.” The npm postinstall lineage has lived with this exact tradeoff since 2010, and nobody is calling for npm to die.

Two things separate MCP from the npm parallel.

The first is that Anthropic is not selling MCP as an opt-in package format. They are pitching it as the universal standard for AI tooling: the bus that LLMs, agents, IDEs, and external systems all converge on. You cannot be the universal standard and disclaim systemic risk. Ecosystem leverage is ecosystem responsibility.

The second is that we already ran this experiment in another lifetime, and the answer was “fix it in the driver.” SQL injection started as “sanitize at the application layer.” For about a decade, that was the prevailing wisdom and the prevailing class of catastrophic breach. The class did not disappear because developers got better at sanitization. It mostly disappeared because parameterized queries became the default, in the driver, baked in. The standards body in this analogy is the SQL spec, and the SQL spec eventually walked the posture back.
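For anyone who missed that era, the difference fits in a few lines. This is an illustrative sketch using Python's standard `sqlite3` module; the table and the hostile input are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 1), ("bob", 0)])

hostile = "nobody' OR '1'='1"

# App-layer posture: the input is spliced into the SQL text, so the
# quote characters in it rewrite the WHERE clause.
concatenated = conn.execute(
    f"SELECT name FROM users WHERE name = '{hostile}'"
).fetchall()

# Driver-level posture: the input travels as a bound value and is only
# ever compared as a string.
parameterized = conn.execute(
    "SELECT name FROM users WHERE name = ?", (hostile,)
).fetchall()
```

The concatenated query returns every row in the table. The parameterized one returns nothing, because no user is literally named `nobody' OR '1'='1`. The developer did not get more careful; the interface stopped letting data become code.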

MCP is choosing the 1999-era posture. That is a choice, not a law of physics.

The Downstream Conscript

The fix exists. It’s just not in the SDK.

LiteLLM shipped a fix for CVE-2026-30623: a hard allowlist limited to npx, uvx, python, python3, node, docker, deno. DocsGPT patched. Bisheng patched. Each maintainer is independently rediscovering the same fix, mounting it on top of a protocol that should have done the work once. This is the failure mode: the protocol pushes a security obligation onto tens of thousands of downstream developers, most of whom are not security engineers, and we measure progress in CVEs filed per quarter.

This is not what “secure by default” looks like. It is what “secure by your weekend” looks like.

Don’t wait for the SDK. Fork the LiteLLM allowlist pattern into your server. Treat the command parameter as untrusted input, even when it comes from your own application: hardening bypasses prove the call site is not enough. The protocol won’t help you and the documentation update is not a control.
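A minimal sketch of what that can look like at a Python call site. The seven command names are the ones listed above for LiteLLM's allowlist. Everything else is an assumption for illustration and not LiteLLM's actual code: the function name, the exception type, and the per-command list of inline-execution flags.

```python
# Command names taken from the allowlist described above.
ALLOWED_COMMANDS = frozenset(
    {"npx", "uvx", "python", "python3", "node", "docker", "deno"}
)

# Assumption: flags or subcommands that make an allowed launcher run inline
# code. This list is illustrative and incomplete.
INLINE_EXEC_FLAGS = {
    "npx": {"-c", "--call"},
    "python": {"-c"},
    "python3": {"-c"},
    "node": {"-e", "--eval", "-p", "--print"},
    "deno": {"eval"},
}


class RejectedCommand(ValueError):
    """Raised when a requested STDIO launch fails validation."""


def validate_stdio_command(command: str, args: list[str]) -> list[str]:
    """Return the argv to spawn, or raise RejectedCommand."""
    # Exact-name match: "bash" and "/tmp/npx" are both refused.
    if command not in ALLOWED_COMMANDS:
        raise RejectedCommand(f"command not allowed: {command!r}")
    banned = INLINE_EXEC_FLAGS.get(command, set())
    for arg in args:
        flag = arg.split("=", 1)[0]  # also catches the --eval=<code> form
        if flag in banned:
            raise RejectedCommand(f"inline execution not allowed: {arg!r}")
    return [command, *args]


argv = validate_stdio_command("npx", ["-y", "example-mcp-server"])
```

This is still an app-layer filter, with the leaks that implies. It does not catch combined short flags such as `python -Ic`, and it cannot tell a trustworthy package from a poisoned one. Where you can, pinning the exact argv of each server you intend to run is stricter than filtering flags.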

The Quieter Fix: Don’t Use It

There is a simpler workaround a lot of teams already chose. I argued in November that for most internal use cases the right answer is a library and a CLI, not an MCP server. Bash, scripts, and a few well-named utilities are observable, sandboxable, version-controlled, and don’t drag a vulnerable transport layer along with them. An agent with a shell and a --help flag outperforms the same model staring at thirty tool schemas.

It’s not always an option. MCP is the correct abstraction when the tooling lives across a process boundary you don’t control: an editor talking to third-party SaaS, a browser session, a remote dev environment. You can’t bash-script your way out of those. But every MCP server you ship in places where you could have shipped a script is a deliberate choice to inherit the protocol’s posture, including the parts Anthropic just declined to fix.

If your MCP server could have been 200 lines of code and a flag, it probably should have been.

The Asymmetry

Anthropic gets to define MCP as transport, not boundary. Anthropic gets to ship the reference SDK in four languages without sanitization. Anthropic gets to refuse the architectural change, point at the README, and call it done. And Anthropic also gets to keep telling the industry that MCP is the convergence point, that everything plugs into it, that this is the future shape of AI tooling.

That’s the free option. The protocol is a standard when standardization helps, and a transport when responsibility shows up.

The Claude Code line in the IDE list is the part that should land internally. An Anthropic product is in the vulnerable column, and Anthropic’s response to that class, per Ox, was that it is “a known issue, or not a valid security vulnerability because it requires explicit user permission to modify the file.” Permission models that route through “the user clicked a thing” are not a defense; they are the entire surface that prompt injection eats. We’ve been writing posts about that for a year.

The Pattern

This is not the first entry in this ledger and it won’t be the last. The cache bug, the source leak, the April 23 postmortem, now MCP. The shape repeats. A specific failure mode shows up. The first response is to disclaim it, characterize it, or push it down the stack. The architectural fix arrives later than it should, or doesn’t arrive. The trust deficit compounds, slowly and quietly, in the gap between “we ship the standard” and “we own the spec.”

It is not malice. It is “we’ll fix it at your layer.”

If MCP is the bus for the next decade of AI tooling, the bus needs a parameterized query. Until then, every npx -c that lands in a JSON config is a working exploit, and every README warning is somebody else’s homework.

That’s not what it means to own the stack.